When test reuse across frameworks became a liability

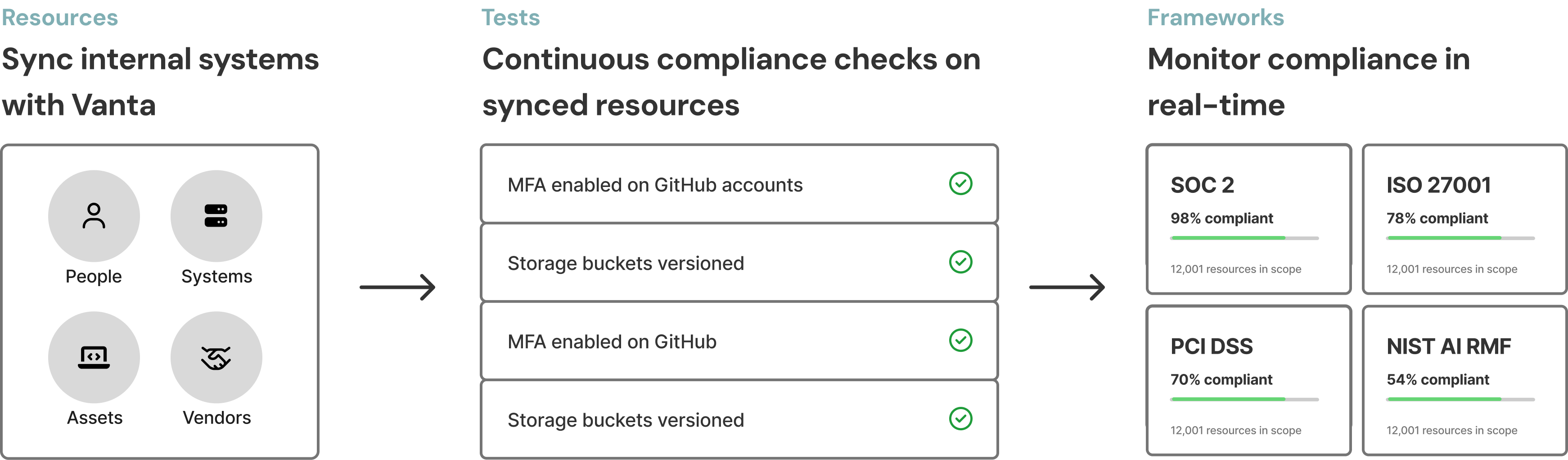

For Vanta customers, compliance runs on automated tests.

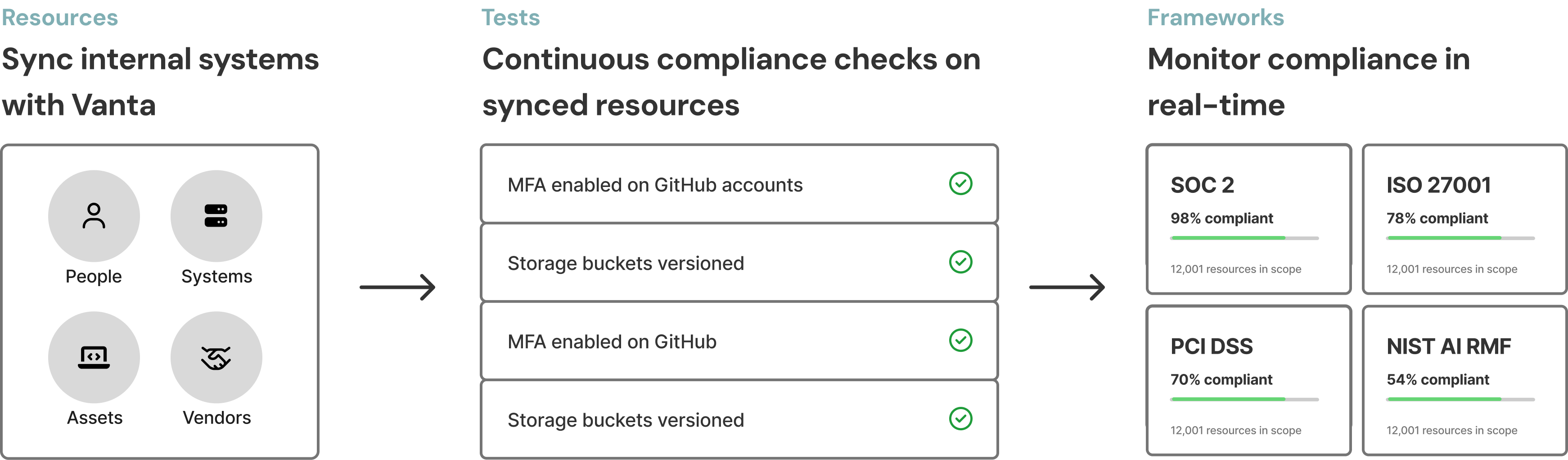

How it works, at a high level: customers connect their internal systems (such as employee accounts, cloud systems, and vendors) and Vanta continuously evaluates them against frameworks such as ISO 27001, PCI DSS, and SOC 2.

How Vanta works

Tests are the operational center of this system. One of Vanta’s key strengths is cross-framework reuse: a single test can satisfy multiple compliance frameworks.

The ability to reuse tests across multiple frameworks worked well in theory, but there was an important catch.

The problem: one test, multiple truths

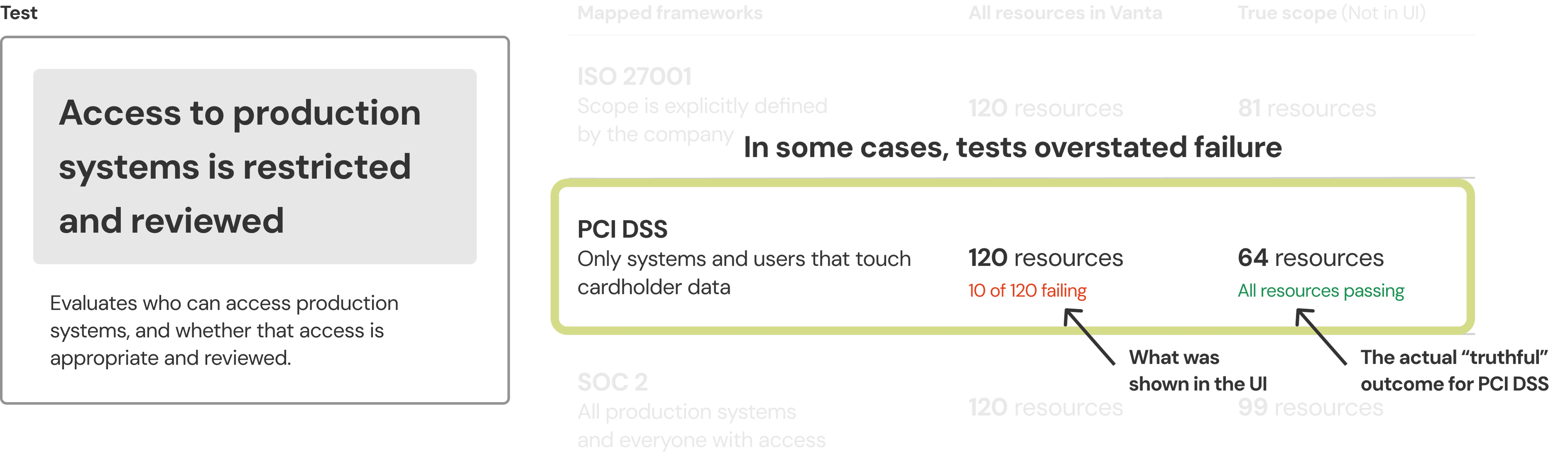

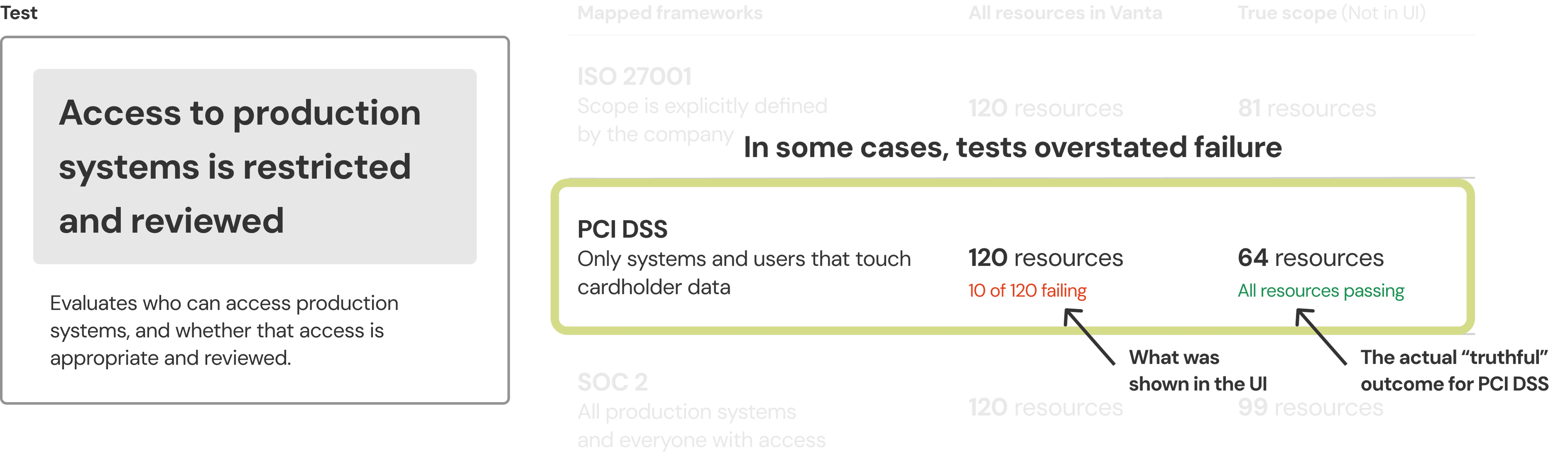

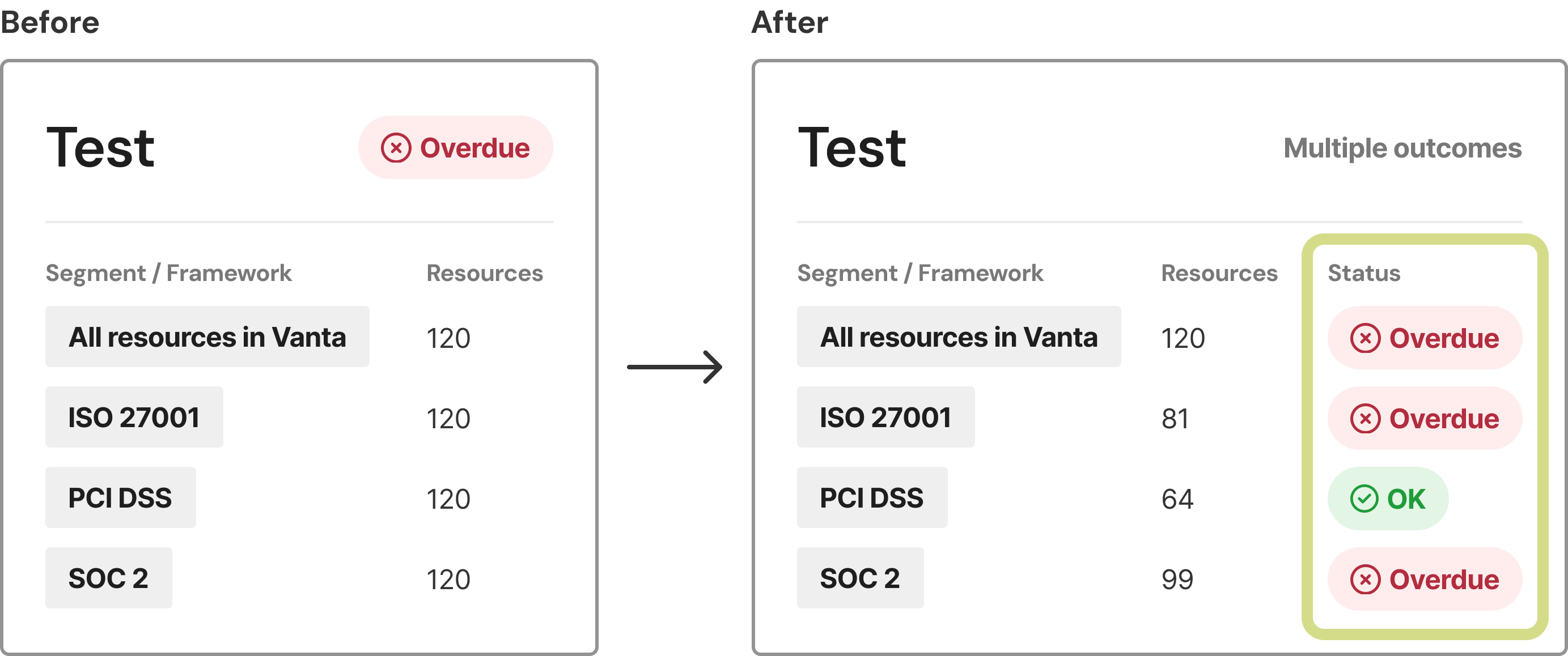

The original product model assumed a test had one status because it treated underlying resources as the same across frameworks. In reality, framework context drives the actual compliance scope.

For example:

• SOC 2 focuses on production systems and the people with access to them.

• PCI DSS is limited to systems and users that handle cardholder data.

• ISO 27001 allows companies to define a custom scope, which can vary significantly from one organization to another.

While the requirement behind the test might be the same, the systems being evaluated should differ by framework. At the time, Vanta evaluated them all against the same global scope.

During audits, customers had to manually reconcile outcomes: explaining why a failing test didn’t apply to PCI, or interpreting the same result differently depending on the framework. That context lived in their heads, not the product.

In some cases, this led to overstated risk. A PCI audit could be fully compliant, yet the UI would display a failing test because an out-of-scope resource had triggered a failure.

This was technically accurate, but functionally misleading. Customer trust began to erode because the product did not reflect framework-specific reality.

A foundational shift: framework scoping

As I was ramping up at Vanta, the team was working on new functionality: framework scoping. This feature would let customers explicitly define scope per framework, resolving the underlying data problem.

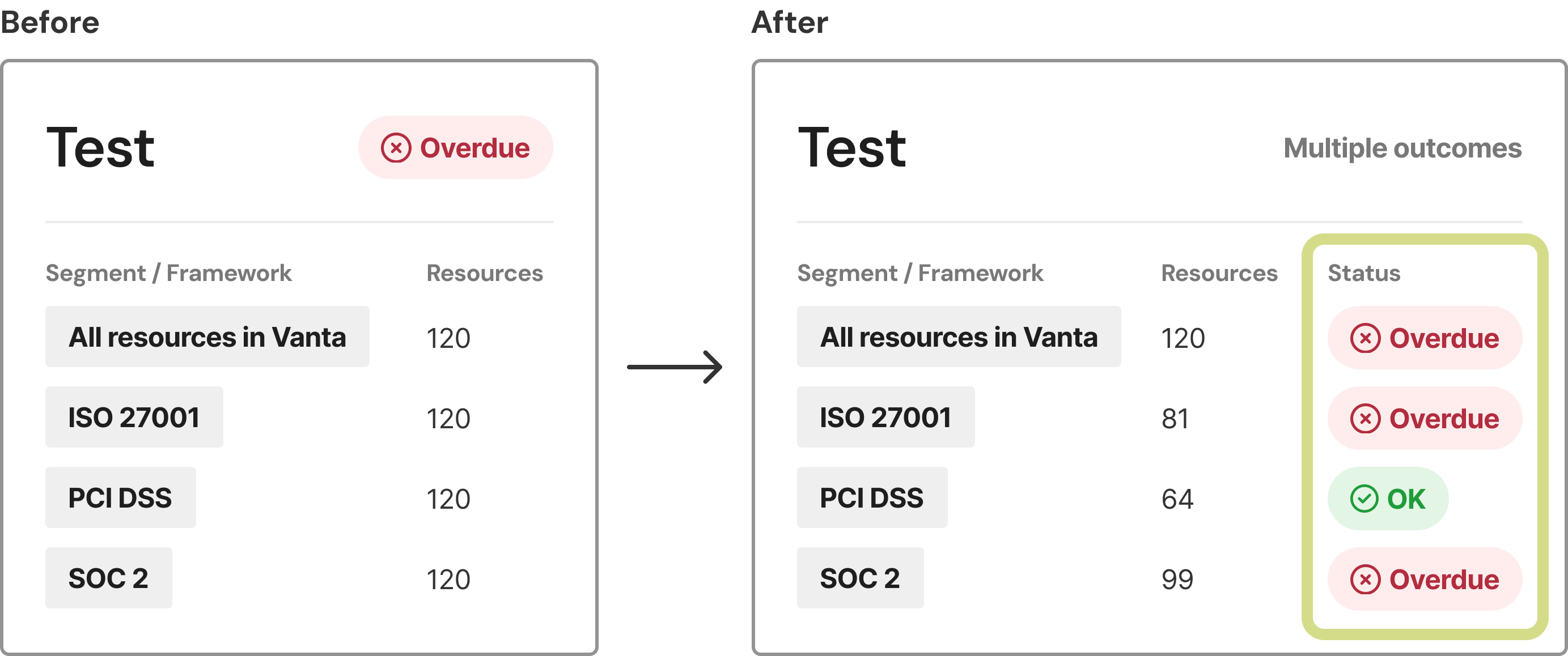

It also changed the meaning of a test: if scope varies by framework, a test can no longer have a single universal outcome.
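To make the shift concrete, here is a minimal TypeScript sketch of one test evaluated against a global scope versus per-framework scopes. It is an illustration under stated assumptions, not Vanta's implementation: the `Resource`, `Scopes`, `evaluate`, and `evaluateByFramework` names and the sample resources are all hypothetical.

```typescript
type Framework = "SOC 2" | "PCI DSS" | "ISO 27001";
type Status = "pass" | "fail";

// Hypothetical shape: one resource plus the result of the test's check on it.
interface Resource {
  id: string;
  compliant: boolean;
}

// Hypothetical per-framework scope: the resource ids a customer marked in scope.
type Scopes = Record<Framework, string[]>;

const FRAMEWORKS: Framework[] = ["SOC 2", "PCI DSS", "ISO 27001"];

// A test passes only if every evaluated resource is compliant.
// Assumption: an empty set of resources counts as passing.
function evaluate(resources: Resource[]): Status {
  return resources.every((r) => r.compliant) ? "pass" : "fail";
}

// Keep only the resources whose id appears in the given scope.
function inScope(resources: Resource[], ids: string[]): Resource[] {
  return resources.filter((r) => ids.indexOf(r.id) !== -1);
}

// After framework scoping: one outcome per framework instead of one universal outcome.
function evaluateByFramework(
  resources: Resource[],
  scopes: Scopes
): Record<Framework, Status> {
  const result = {} as Record<Framework, Status>;
  for (const framework of FRAMEWORKS) {
    result[framework] = evaluate(inScope(resources, scopes[framework]));
  }
  return result;
}

// Illustrative data only.
const resources: Resource[] = [
  { id: "prod-db", compliant: true },
  { id: "card-vault", compliant: true },
  { id: "staging-box", compliant: false },
];

const scopes: Scopes = {
  "SOC 2": ["prod-db", "card-vault"],
  "PCI DSS": ["card-vault"],
  "ISO 27001": ["prod-db", "card-vault", "staging-box"],
};

// Before: every resource is evaluated, so the staging box fails the test everywhere.
const globalStatus = evaluate(resources);

// After: the same failure only counts where the staging box is actually in scope.
const perFramework = evaluateByFramework(resources, scopes);
```

In this sample, the global evaluation fails because of the staging box, while the PCI DSS and SOC 2 outcomes pass because that resource is outside their scopes. Only ISO 27001, whose custom scope includes it, reports the failure.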

My role was to translate this new data model into a trustworthy and usable test experience.

Tests before and after framework scoping

Understanding how customers use tests

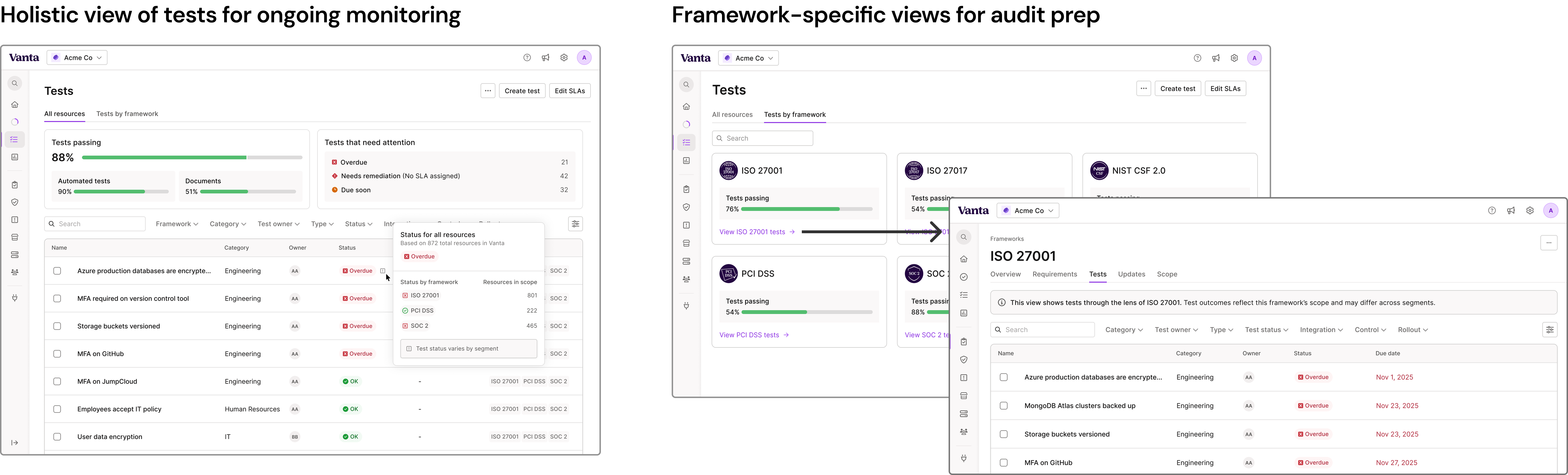

Early discovery revealed that customers do not interact with tests in a single mode. They move between distinct jobs:

• Ongoing monitoring: “Are we generally in a good place?”

• Execution: “What do I need to fix?”

• Audit preparation: “Are we ready for ISO?”

Each mode carries a different expectation of precision and context.

I quickly realized that data accuracy alone was not sufficient. The experience needed to present the appropriate level of compliance status at the right moment without overwhelming users.

Decision 1: Preserve or remove the top-level status?

With framework scoping in place, I explored two directions:

• Preserve a single high-level status

• Eliminate the global status and display framework-specific outcomes only

Preserving a top-level signal maintained scannability and familiarity, but risked oversimplification. Removing it improved precision but introduced noise and reduced clarity in day-to-day monitoring.

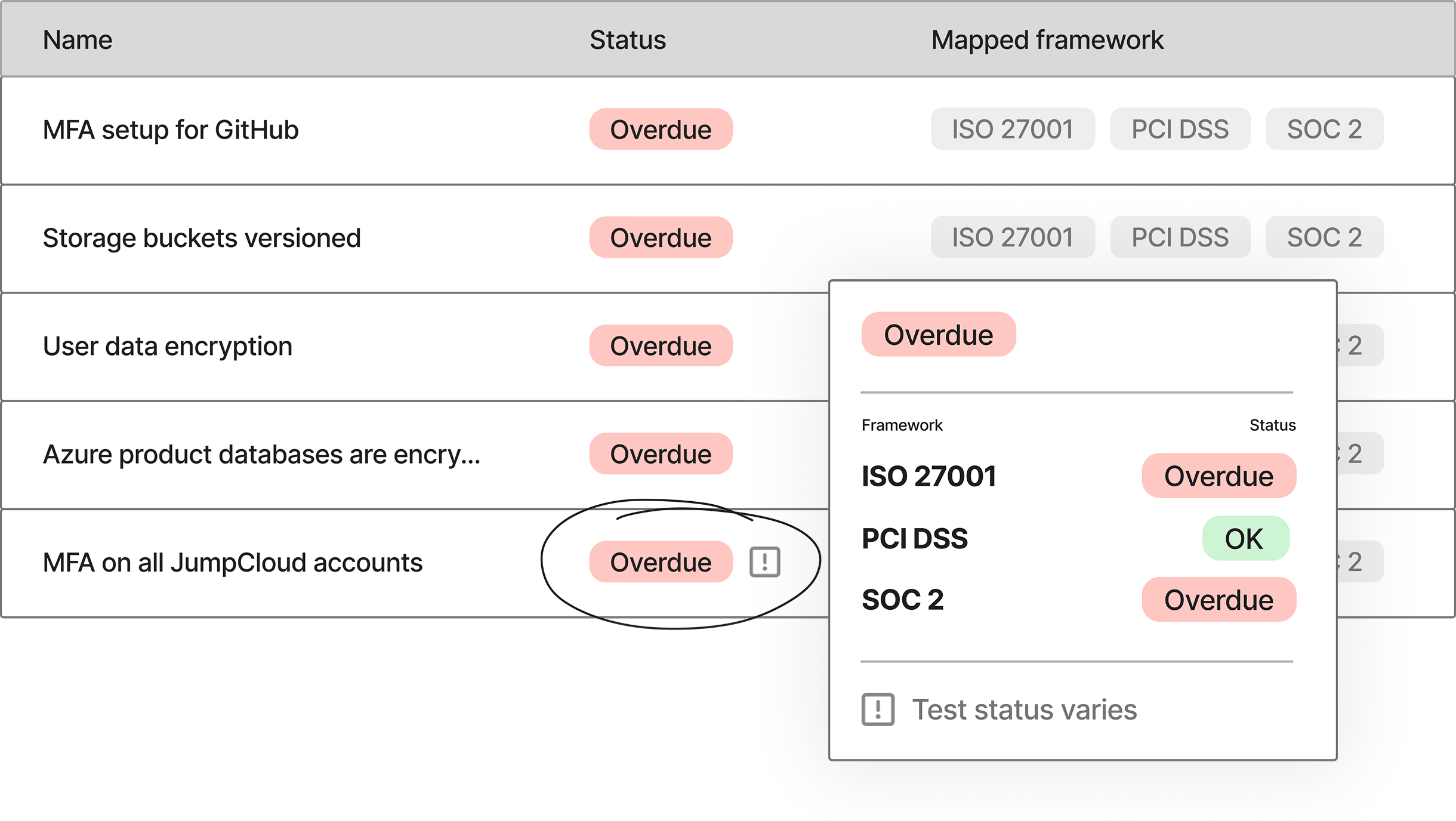

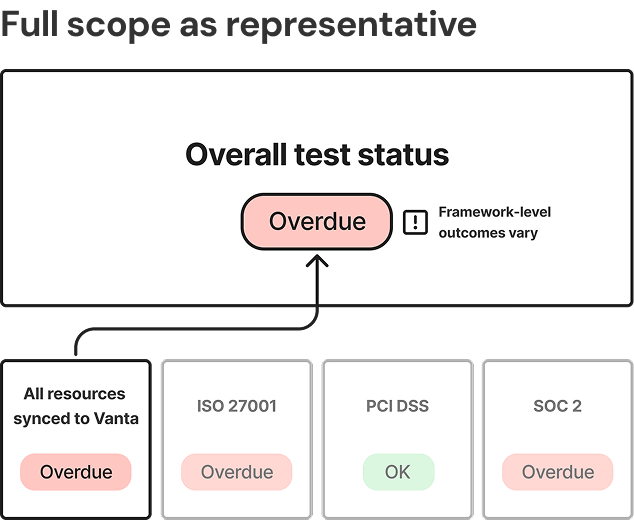

I landed on a hybrid approach: keeping the high-level status for quick comprehension, while introducing a clear indicator when outcomes varied by framework.
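The hybrid can be thought of as a small summary object: one top-level status plus a flag that drives the indicator. The TypeScript sketch below is a hypothetical illustration, not the shipped logic. The `TestSummary` shape and the rule for when the flag is set (any framework outcome differing from the top-level status) are assumptions made for the example.

```typescript
type Status = "pass" | "fail";

// Hypothetical view model for a row in the Tests list.
interface TestSummary {
  overall: Status;            // the familiar top-level status
  variesByFramework: boolean; // drives the "outcomes vary" indicator
}

// Assumption: the indicator appears whenever at least one framework-specific
// outcome differs from the top-level status. This also covers frameworks
// disagreeing with each other, since at least one of them must then differ
// from the overall value.
function summarize(
  overall: Status,
  byFramework: Record<string, Status>
): TestSummary {
  const outcomes = Object.keys(byFramework).map((name) => byFramework[name]);
  const variesByFramework = outcomes.some((status) => status !== overall);
  return { overall, variesByFramework };
}

// Illustrative data only.
const mixed = summarize("fail", { "SOC 2": "pass", "PCI DSS": "pass", "ISO 27001": "fail" });
const uniform = summarize("pass", { "SOC 2": "pass", "PCI DSS": "pass" });
```

Here `mixed` keeps the failing top-level status but sets the flag, so the list can stay scannable while signaling that the framework-specific picture is different. `uniform` shows no indicator.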

Decision 2: How should the overall status be calculated?

Once we agreed a global status still mattered, the next question became: What should that overall status represent?

I explored three key options:

1. Explicitly indicate variability

2. Display the most severe framework outcome

3. Use full-scope evaluation as a conservative proxy

Customer feedback favored displaying the most severe outcome. It aligned with their mental model: if a test is failing anywhere relevant, they want to know.

However, implementing that logic required significant changes to the existing status model.

To unblock the broader framework scoping launch, we chose an interim solution: use the original global scope as the overall proxy, while clearly communicating its meaning in the UI.

This sequencing allowed us to prioritize speed and customer value without compromising transparency. Engineering alignment ensured we could evolve toward a true aggregate model over time.
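The difference between the customer-preferred option and the interim one is easiest to see side by side. The TypeScript sketch below is an assumption-laden illustration rather than the real status model: the `SEVERITY` ranking, the `Strategy` names, and the `overallStatus` function are hypothetical.

```typescript
type Status = "pass" | "fail";

// Hypothetical severity ranking; a real model may have more than two states.
const SEVERITY: Record<Status, number> = { pass: 0, fail: 1 };

// Option 2: the overall status is the most severe framework-specific outcome.
function mostSevere(byFramework: Record<string, Status>): Status {
  let worst: Status = "pass";
  for (const name of Object.keys(byFramework)) {
    const status = byFramework[name];
    if (SEVERITY[status] > SEVERITY[worst]) {
      worst = status;
    }
  }
  return worst;
}

type Strategy = "most-severe" | "global-proxy";

// Option 3 (the interim choice): reuse the original global-scope result as the
// overall status, and rely on the UI to explain what that status means.
function overallStatus(
  strategy: Strategy,
  globalScopeStatus: Status,
  byFramework: Record<string, Status>
): Status {
  return strategy === "most-severe" ? mostSevere(byFramework) : globalScopeStatus;
}

// Illustrative data only: a resource outside every framework's scope is failing.
const byFramework: Record<string, Status> = { "SOC 2": "pass", "PCI DSS": "pass" };
const aggregate = overallStatus("most-severe", "fail", byFramework);
const interim = overallStatus("global-proxy", "fail", byFramework);
```

With this sample data, the aggregate model reports a pass while the global proxy reports a fail. That gap is why the proxy is conservative, and why the interim design depended on clearly communicating what the overall status meant.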

Decision 3: One surface or two?

I initially attempted to extend the existing Tests list to support framework-specific audit workflows.

Each iteration introduced a different tradeoff:

• Filters subtly changed the meaning of status

• Context became easy to miss

• The interface became overly complex

The core insight was that audit preparation is a fundamentally different job from ongoing monitoring.

Monitoring requires synthesis and speed. Audit preparation requires precision and explicit scope.

Rather than stretching a single surface to serve both, I introduced a dedicated framework-specific test view for audit workflows. This separation clarified mental models. Monitoring remained streamlined. Audit preparation became precise and defensible.

Outcome

The final solution reduced overstated failures in framework-specific contexts, while preserving existing remediation workflows. Customers incorporated framework-specific outcomes into daily workflows with no increase in support tickets or slowdown in remediation.

More importantly, this work laid the foundation for supporting larger, more complex customers managing many frameworks and unlocked future investments in enterprise-grade scoping and segmentation.